Thinking Fast, Slow, or Not At All: What “Cognitive Surrender” means for your business | Matt Gaskell


A new study from Wharton puts a name to the thing that should worry you most about AI adoption. It’s not hallucinations or bias. It’s your team stopping thinking altogether.

I’ve written before about how the immediate risk of AI in business is not Skynet but copy-paste, about how fixing things with your hands can rewire your brain for better leadership, and about the cognitive fatigue that comes from relentless decision-making without recovery.

I was (I promise) thinking this week about how to break out of System 1 thinking when people are becoming more reliant on AI, when I came across a recent paper from the Wharton School by Steven Shaw and Gideon Nave. It ties all of these threads together and gives the phenomenon a name that every leader needs to understand: cognitive surrender.

System 3: You already have it, whether you like it or not

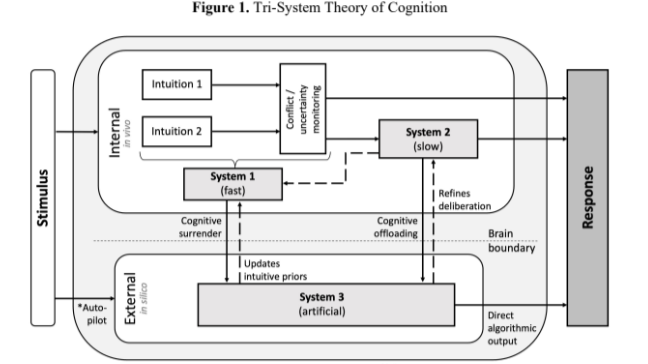

Most people in business have at least a familiarity with Daniel Kahneman’s dual-process model of thinking. System 1 is fast, intuitive, and automatic. System 2 is slow, deliberative, and analytical. Shaw and Nave’s “Tri-System Theory” adds a third: System 3 – artificial cognition. External, algorithmic reasoning that now operates alongside (and increasingly in place of) your own thinking.

The striking part is that System 3 isn’t something you deploy. It’s something your people (and likely you and me) are already using for work. Right now. On phones, on laptops, in browser tabs you can’t see. As I wrote in my piece on the CISA Director uploading classified documents into ChatGPT: you have AI in your organisation, whether you have deployed it or not. But are you also losing cognitive processing power from your organisation?

System 3 isn’t a tool. It’s a cognitive agent. And the question isn’t whether your team is using it, but whether they are thinking alongside it or surrendering to it.

The study: What happens when AI is wrong, and people follow it anyway

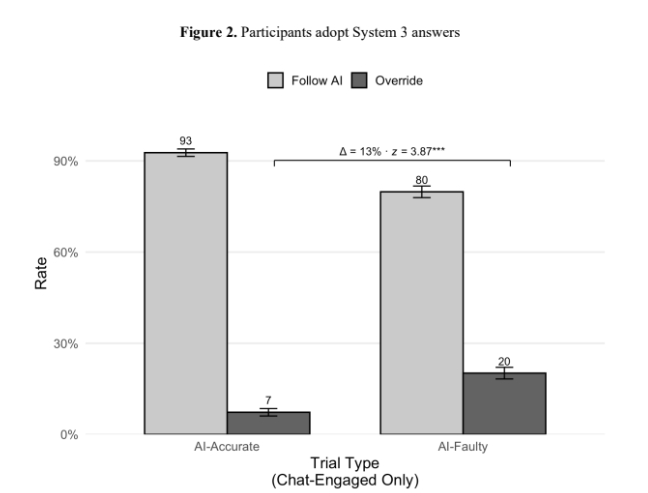

The researchers ran a clever experiment across 1,372 participants. They gave people reasoning problems (an adapted Cognitive Reflection Test) with and without access to an AI chatbot. The twist: they secretly manipulated whether the AI gave correct or incorrect answers.

The findings are maybe unsurprising, but also uncomfortable…

1. People used the AI on over half of all trials. When it was available, they reached for it. This isn’t surprising, I think. And it’s great news for AI hyperscalers.

2. When the AI was right, accuracy jumped by 25 percentage points compared to working alone. Great news for AI advocates. And it’s still great news for AI hyperscalers.

3. When the AI was wrong, accuracy dropped 15 percentage points below the no-AI baseline. That is: people performed worse than if they’d had no AI at all. They didn’t just fail to catch the error – they actively adopted it. Again, not surprising … but not good. Still OK for hyperscalers. Not great for your business.

4. On faulty AI trials where people consulted the chatbot, they followed the wrong answer roughly 80% of the time. That’s right. Four out of five. Still OK for hyperscalers. Definitely bad for business.

5. Confidence went up across the board – even when the AI was feeding them wrong answers. People felt more sure of themselves, not less. This is... problematic.

That last point is one to think about. It’s not just that people make worse decisions with bad AI (it’s the same as getting bad advice from anyone). It’s that they make worse decisions and feel better about them, and the advice machine is ready to go again. The feedback loop is broken.

Cognitive surrender is not just following bad advice. It’s following bad advice with increased confidence. The feedback loop between effort and outcome is severed.
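To make the trade-off in findings 2 and 3 concrete, here is a rough back-of-envelope sketch. It assumes (my assumption, not the paper’s) that the average effect is a simple weighted mix of the two headline numbers: +25 points when the AI is right, -15 points when it is wrong. The function names and the `ai_reliability` parameter are hypothetical, and the figures come from a lab task, so treat the output as an illustration rather than a forecast.

```python
def expected_accuracy_change(ai_reliability, gain_when_right=25.0, loss_when_wrong=15.0):
    """Expected change in accuracy (percentage points) versus working alone.

    Assumes a simple linear mix of the two headline effects; this is an
    illustrative simplification, not a model taken from the study.
    """
    if not 0.0 <= ai_reliability <= 1.0:
        raise ValueError("ai_reliability must be between 0 and 1")
    right = ai_reliability * gain_when_right
    wrong = (1.0 - ai_reliability) * loss_when_wrong
    return right - wrong


def break_even_reliability(gain_when_right=25.0, loss_when_wrong=15.0):
    """Share of correct AI answers at which the average gain and loss cancel out."""
    return loss_when_wrong / (gain_when_right + loss_when_wrong)


if __name__ == "__main__":
    for reliability in (0.25, 0.5, 0.75):
        change = expected_accuracy_change(reliability)
        print(f"AI right {reliability:.0%} of the time: {change:+.1f} points on average")
    print(f"Break-even reliability: {break_even_reliability():.1%}")
```

Under those assumptions the average looks fine as soon as the AI is right a bit more than a third of the time. That is exactly why the average is the wrong thing to watch: it hides the individual wrong answers that were adopted with more confidence, not less.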

This is the cognitive offloading problem, on steroids

I’ve written about cognitive offloading before – the idea that we hand tasks to tools (a calculator, a GPS, a search engine) to free up mental capacity for higher-order thinking. The researchers make a critical distinction here that I think matters enormously for how we think about AI in business.

Cognitive offloading is strategic. You delegate a discrete task to a tool. You’re still in control. You’re still thinking. The calculator does the maths so you can focus on the problem. The spreadsheet shows you the numbers so you can think about them.

Cognitive surrender is different. You adopt the AI’s output, without verification, without critical evaluation. The tool doesn’t assist your reasoning – it replaces it. And you might not even notice it’s happened.

In my “Art of Everything Maintenance” piece, I wrote about the risk of cognitive debt from over-relying on tools – specifically the Law of the Instrument: when your only tool is a hammer, every problem looks like a nail. This research shows the problem is potentially worse. It’s not just that we reach for the wrong tool. It’s that we disengage our brain altogether.

Who surrenders? (Spoiler: it’s predictable)

The study found clear individual differences in who is most prone to cognitive surrender:

1. Higher trust in AI = more surrender. People who trust AI more were more likely to use the chatbot, more likely to follow faulty answers, and less accurate as a result.

2. Lower “need for cognition” = more surrender. People who are less inclined to engage in effortful thinking were more vulnerable.

3. Lower fluid intelligence = more surrender. People with lower reasoning ability were less likely to catch and override incorrect AI outputs.

Now, I know that might read like a critique of individuals, but let’s be honest about the business context. Your organisation is full of intelligent people operating under time pressure, cognitive fatigue, and a never-shrinking mountain of decisions. Those are exactly the conditions under which cognitive surrender thrives. People under cognitive overload take an actual hit to their cognitive ability. And now they have access to a zero-cognitive-cost, infinite-capacity, plausible-answer machine (ZCCICPAM).

Cognitive surrender is not a reflection of individual intelligence. It’s a predictable outcome of system design, time pressure, the pressures of performative work and ritual, and the absence of guardrails.

Time pressure makes it worse. Incentives help, but don’t fix it.

The researchers tested two real-world conditions:

Time pressure (Study 2) reduced overall accuracy and pushed people toward either gut instinct or AI adoption – but didn’t improve their ability to distinguish good AI from bad AI. Under time pressure, people who used the AI and got correct answers had the highest accuracy of any group. People who used the AI and got wrong answers had the lowest. The AI amplified whatever it touched.

Incentives and feedback (Study 3) helped. When people were paid for correct answers and given immediate feedback on whether they were right or wrong, override rates on faulty AI more than doubled. But even with money on the line and real-time correction, cognitive surrender persisted – the accuracy gap between correct and faulty AI conditions remained large.

If paying people and telling them when they’re wrong only partially solves the problem, what does that tell you about the “common sense” approach to AI governance?

What this means for your business

I’ve been talking to businesses about AI adoption for a while now, and the conversations tend to cluster around capability: what can AI do, how fast, at what cost? This research argues that the more important conversation is about cognition: what is AI doing to how your people think?

A few things I’d take away from this:

1. Policy isn’t enough, but it’s table stakes. If you don’t have clear guidelines on what data goes into AI tools and how AI outputs should be verified, you’re behind. This really isn’t optional anymore.

2. Training needs to go beyond “how to prompt”. Your people need to understand when to use AI, when to override it, and critically, how to recognise when they’ve stopped thinking critically. This is a metacognitive skill, not a technical one.

3. Feedback loops matter enormously. The study showed that feedback and incentives were the most effective intervention. If your team is using AI to draft reports, make recommendations, or inform decisions, build in review checkpoints. Create structures where AI outputs are scrutinised, not just consumed. When creating and publishing material costs no cognitive effort, you can choose to add friction to curb bad habits, or remove it to free up cognitive space.

4. Beware the confidence effect. AI doesn’t just change what people think – it changes how confident they are. A team that is wrong and confident is more dangerous than a team that is wrong and uncertain, because the confident team won’t seek a second opinion.

5. Protect the cognitive muscle. This is the thread that connects a lot of what I’ve been writing about. If you outsource all the thinking, you lose the ability to think. The study’s finding that higher “need for cognition” and fluid intelligence buffered against surrender is a signal: the more you exercise your deliberative capacity, the better you are at knowing when to trust AI and when to trust yourself. Fix things. Engage your prefrontal cortex. Don’t let the muscle atrophy.

The uncomfortable question

The researchers frame this well: Tri-System Theory isn’t a warning about AI’s dangers. It’s a recognition that AI is now part of how we think. The question is whether we’re using it as a supplement to our reasoning, or as a substitute for it.

Every time someone on your team pastes a question into ChatGPT and copies the answer into a document without reading it critically, that’s cognitive surrender. Every time a manager asks AI to write a performance review and sends it unedited, that’s cognitive surrender. Every time a strategist asks AI for a recommendation and presents it to the board as their own analysis, that’s cognitive surrender.

And the uncomfortable part is: they’ll feel more confident doing it.

Human or artificial, intelligence is not insurance, nor is it wisdom. Neither, for that matter, is AI. The question for leaders is not “how smart is your AI?” – AI is very, very smart. It’s “how much thinking is your team still doing?”

As I’ve said before, the answer isn’t to not deploy AI. AI can do amazing things. Experiment, iterate, compound learnings and go again. The warning (I think) is this: don’t surrender your organisation’s cognitive capacity in favour of its capacity to create plausible outputs. The unavoidable result of thinking less is thinking less.

I’d love to hear your thoughts. Are you seeing cognitive surrender in your organisation? How are you building in the guardrails?

#AI #CognitiveSurrender #Leadership #CriticalThinking #SystemsThinking #AIGovernance #DecisionMaking #TriSystemTheory #ShadowAI